There is a whole series of articles and pages on the subject of OpenAPI on the net. So why should I write another one? Quite simple. Many of these articles are aimed at (experienced) web developers. But what about all those who have so far dealt with EDI or the exchange of electronic business messages? This short introduction is for you.

APIs for the exchange of business documents

An API as a link between two systems or software components is nothing new. But its application for the exchange of business documents is. For this requirement, solutions for the electronic exchange of business data have existed for many decades. Examples are the classic EDI based on EDIFACT or the exchange of XML files. The latter is becoming more widespread, especially in the wake of the mandatory introduction of electronic invoices for public buyers.

But it is precisely here that the problems of previous solutions become apparent. In principle, classic document exchange is nothing other than a digital replica of the paper world. So the basic principle has hardly changed in the last 100 years. Only the transmission medium has changed: from paper to one of many electronic formats. This worked well as long as the documents to be transmitted were only exchanged between two partners. For example, an invoice from the seller to the buyer.

However, due to advancing digitalisation combined with globalisation, requirements are increasing. Often not only two but more partners are interested in information interchange. For example, if goods are to be transported across borders. Then, on top of the classic partners, add the importing and exporting customs authorities, the transport company and possibly other partners. Consequently, this is a very heterogeneous world in terms of technology. And at the same time, the demand for transparency in the supply chain is increasing. And the demand for better detection and prevention of product piracy and counterfeiting.

This is hardly feasible with classic EDI

But why is this so difficult to implement with classic EDI? Certainly, the fact that there is not the one and only “EDI” but many differing standards for the exchange of business documents plays an important role. In many cases, industry requirements or the requirements of individual organisations add a burden. This often dilutes a standard to such an extent that there can be many hundreds of variants of a single message. A further complicating factor is that the requirements for “business documents” still have to be fulfilled.

And come to think of it – these requirements come from the paper world. A world where people reconcile the received (paper) document with the books (accounting). These documents also contain a lot of information that could actually be superfluous in an electronic process.

For example, a customs authority does not need to know the complete contractual relationships including delivery terms or agreed conditions. Classically, this in turn creates new documents and new (EDI) messages. Or the persons or systems involved do not (yet) have uniform electronic access to the information. Today, large suppliers and their customers announce deliveries electronically with the despatch advice message. And yet a delivery note or goods accompanying note is also printed out and attached to the consignment.

For a smooth EDI implementation, the processes of the individual organisations along the value chains must also be semantically interlinked. So the management systems of the organisations must also be able to deal with such electronic messages. And they must always be at the same level. This is difficult to achieve in practice. Standards help, but implementation is often too difficult and too expensive.

Who might cloud my data?

There is yet another aspect to this. A study from 2020 showed that one of the biggest obstacles is the fear of losing control over one’s own data through central data exchange platforms.

Ensuring data sovereignty is therefore an essential aspect when new platforms are to be created in the business-to-business sector.

REST APIs offer a clear approach here to move away from business documents for data exchange. Instead, only the information that is actually needed is exchanged. With clearly defined partners. This ensures, for example, that no agreed prices get into the wrong hands via a supplier portal.
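To make the idea tangible, here is a minimal sketch of "only the information that is actually needed". The shipment record, the partner roles and all field names are my own assumptions for illustration; a real API would derive them from its specification.

```python
# Hypothetical shipment record held by the seller; all field names are illustrative.
SHIPMENT = {
    "shipment_id": "SHP-1001",
    "goods_description": "Machine parts",
    "gross_weight_kg": 1250,
    "country_of_origin": "DE",
    "agreed_unit_price": 48.90,
    "payment_terms": "30 days net",
}

# Assumed per-partner views: which fields each partner role may see.
PARTNER_VIEWS = {
    "customs": {"shipment_id", "goods_description", "gross_weight_kg", "country_of_origin"},
    "carrier": {"shipment_id", "gross_weight_kg"},
}


def view_for(partner_role: str, record: dict) -> dict:
    """Return only the fields the given partner role is allowed to see."""
    allowed = PARTNER_VIEWS.get(partner_role, set())
    return {key: value for key, value in record.items() if key in allowed}


customs_view = view_for("customs", SHIPMENT)
carrier_view = view_for("carrier", SHIPMENT)
```

The customs authority never receives the agreed price, and an unknown partner role receives nothing at all.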

Separation of data structures and services

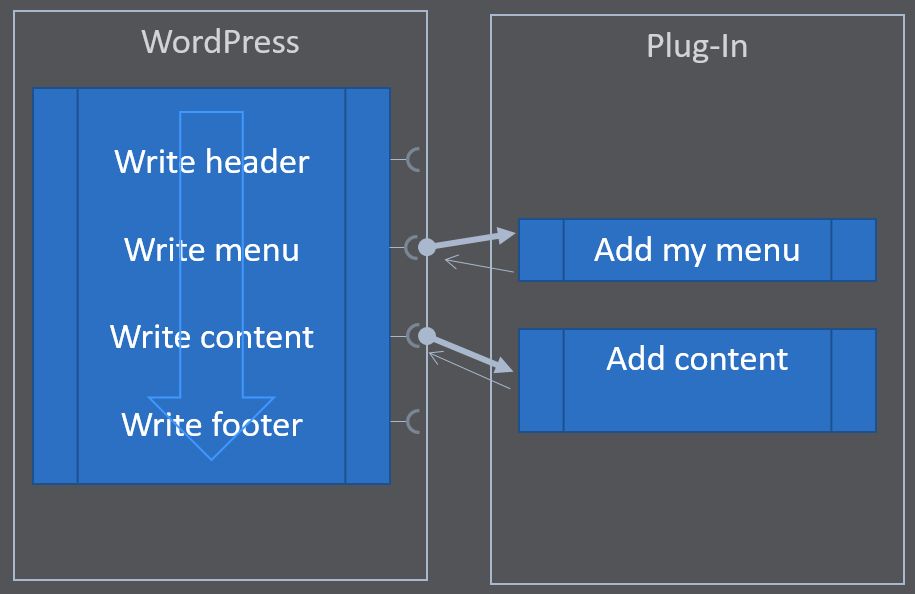

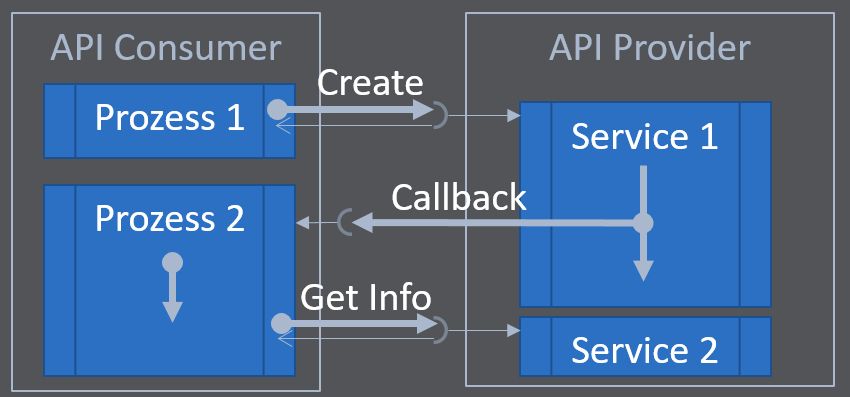

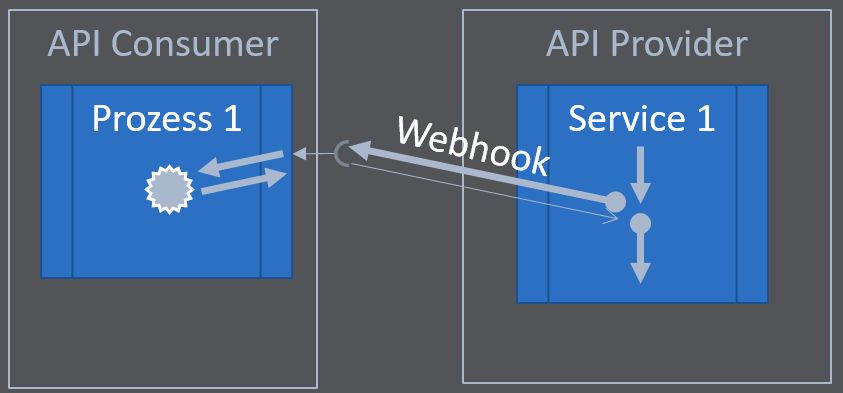

A classic EDI scenario essentially comprises only two services: the conversion of data from a source system into the data format of the target system, and the forwarding of the data to the target system. Of course, there can be more complex scenarios with feedback that works similarly to registered mail with return receipt. But anything beyond that is no longer part of the actual EDI scenario. The processes themselves have to be provided by the respective end systems. For example, an order confirmation for an order is created by the seller’s system. The EDI solution then transmits the full content back again. So again with the same services, but using a different message.
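Reduced to its core, the classic scenario could be sketched like this. The field mapping and the record are invented for illustration, and a simple list stands in for the transmission channel.

```python
# Assumed mapping from the source system's field names to the target system's.
FIELD_MAPPING = {"OrderNo": "order_number", "Qty": "quantity", "ArtNo": "item_code"}


def convert(source_record: dict, mapping: dict) -> dict:
    """Service 1: translate a record into the target system's format."""
    return {mapping[key]: value for key, value in source_record.items() if key in mapping}


def forward(message: dict, target_inbox: list) -> None:
    """Service 2: deliver the converted message (a list stands in for the network)."""
    target_inbox.append(message)


inbox = []
forward(convert({"OrderNo": "4711", "Qty": 10, "ArtNo": "A-1"}, FIELD_MAPPING), inbox)
```

Everything else, such as creating the order confirmation, happens in the end systems and not in these two functions.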

In the world of APIs, the service concept goes beyond this. An API can actively support individual process steps. Or it can also provide real added value, such as the provision of information. Whereby the API user can determine which filter criteria should be applied. This is hardly conceivable in the classic EDI world.
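A small sketch shows what user-defined filter criteria could look like. The records and the function `find_despatch_advices` are hypothetical; in a real REST API the criteria would typically arrive as query parameters.

```python
# Hypothetical data the API provider holds; field names are illustrative only.
DESPATCH_ADVICES = [
    {"id": "DA-1", "buyer": "ACME", "status": "shipped"},
    {"id": "DA-2", "buyer": "ACME", "status": "announced"},
    {"id": "DA-3", "buyer": "Globex", "status": "shipped"},
]


def find_despatch_advices(records: list, **criteria) -> list:
    """Return the records that match every filter criterion the caller supplied."""
    return [
        record
        for record in records
        if all(record.get(field) == value for field, value in criteria.items())
    ]


shipped_to_acme = find_despatch_advices(DESPATCH_ADVICES, buyer="ACME", status="shipped")
```

The caller decides which criteria apply; without any criteria, all records are returned.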

These possibilities lead to the fact that not only the data structures are defined in an API, but also the services. These then use the defined data structures to process incoming information or to provide outgoing information.

Won’t APIs make everything more complicated?

Phew, that sounds pretty complicated. And if more is added now, won’t it become even more confusing? And someone still has to implement it!

This is exactly where OpenAPI comes in. The point is precisely not to develop proprietary rules, but to standardise the specification of an API. Understanding this difference is immensely important. So it’s not about how an API is implemented. No, it’s about what exactly it should do. What services it offers and what data structures it supports.

As described above, there are many standards in the EDI environment, including international ones. The United Nations Centre for Trade Facilitation and Electronic Business (UN/CEFACT) has been standardising the meanings of data structures in business documents for many years. UN/CEFACT publishes semantic reference data models, which are used for guidance by many organisations and industries worldwide.

About meanings and twins

REST APIs have been used on the internet for many years. Especially for providing services for other websites or on mobile devices. Examples are APIs for currency conversion or the various map APIs, with which one can easily navigate from A to B. The semantic web also plays an important role. The systems of online shops, search engines and social networks should be able to recognise semantic connections. For example, which ingredients a recipe requires, how long it takes to prepare, and which shops offer these ingredients at which prices.

The standards on schema.org provide an essential basis for this. All these services are guided by these clear definitions and make it possible to map so-called digital twins. Everything identifiable in the real world can also be mapped digitally: People, events, houses, licences, software, products and services, to name but a few. And the whole thing is understandable for people and processable by machines.
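As an illustration, the recipe mentioned above could be described with the schema.org type `Recipe` roughly as follows. The recipe itself is made up, and the helper `ingredients` is only a sketch of how a machine might read the description.

```python
import json

# An invented recipe, described with schema.org vocabulary in JSON-LD form.
RECIPE = {
    "@context": "https://schema.org",
    "@type": "Recipe",
    "name": "Pancakes",
    "totalTime": "PT30M",  # ISO 8601 duration: 30 minutes
    "recipeIngredient": ["250 g flour", "2 eggs", "500 ml milk"],
}


def ingredients(thing: dict) -> list:
    """Return the ingredient list if the described thing is a Recipe, else nothing."""
    if thing.get("@type") != "Recipe":
        return []
    return list(thing.get("recipeIngredient", []))


# The same structure that a machine processes can also be shown to a person.
readable = json.dumps(RECIPE, indent=2)
```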

No wonder that many, including UN/CEFACT, have asked how this can be transferred to the business-to-business level. How can the achievements of the last decades be preserved with web APIs?

OpenAPI – A specification standard for APIs

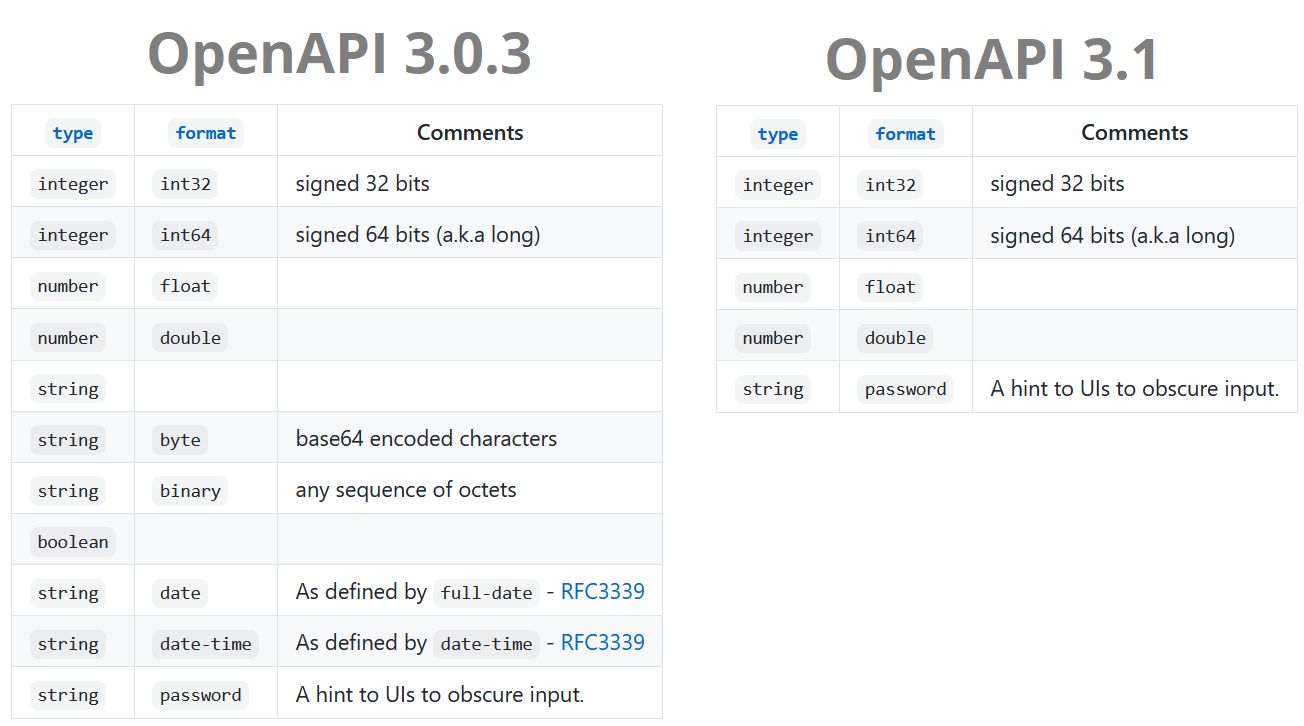

The OpenAPI specification standard thus defines a set of rules on how APIs are to be specified. Which services are provided? What data structures are needed? What are the requirements for an implementation? And all of this in a version that can be read by the developer, but also by a machine.
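To give an impression, here is a minimal sketch of such a specification, written as a Python dictionary that serialises to JSON. The despatch advice API, its path and its fields are purely hypothetical; only the overall layout (`openapi`, `info`, `paths`, `components`) follows the OpenAPI structure.

```python
import json

OPENAPI_DOCUMENT = {
    "openapi": "3.1.0",
    "info": {"title": "Despatch Advice API (hypothetical)", "version": "1.0.0"},
    # The services: which endpoints exist and what they return.
    "paths": {
        "/despatch-advices/{despatchAdviceId}": {
            "get": {
                "operationId": "getDespatchAdvice",
                "parameters": [
                    {
                        "name": "despatchAdviceId",
                        "in": "path",
                        "required": True,
                        "schema": {"type": "string"},
                    }
                ],
                "responses": {
                    "200": {
                        "description": "The requested despatch advice",
                        "content": {
                            "application/json": {
                                "schema": {"$ref": "#/components/schemas/DespatchAdvice"}
                            }
                        },
                    },
                    "404": {"description": "No despatch advice with this identifier"},
                },
            }
        }
    },
    # The data structures: defined once, referenced by the services.
    "components": {
        "schemas": {
            "DespatchAdvice": {
                "type": "object",
                "required": ["id", "shipDate"],
                "properties": {
                    "id": {"type": "string"},
                    "shipDate": {"type": "string", "format": "date"},
                    "grossWeightKg": {"type": "number"},
                },
            }
        }
    },
}


def resolve_ref(document: dict, ref: str) -> dict:
    """Follow a local reference such as '#/components/schemas/DespatchAdvice'."""
    node = document
    for part in ref.removeprefix("#/").split("/"):
        node = node[part]
    return node


as_json = json.dumps(OPENAPI_DOCUMENT, indent=2)
```

The service under `paths` does not repeat the data structure. It only points to it, which is exactly the separation of services and data structures described earlier.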

This is precisely where the real strength of this standard lies: it is very widely supported by a variety of tools and programming environments. This makes it possible to define an API at the business level. With all its services and data structures. At the same time, it can be described in such a way that a developer can implement it as intuitively as possible.

And the developer is massively supported in this. Since the specification is machine-readable, tools exist that generate source code directly from the specification. This ensures that the specified properties of the interface itself are implemented correctly: The names of endpoints (services) are correct. The data structures used by these are implemented correctly and all return values are also clearly defined.
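The principle can be shown with a deliberately tiny toy generator. It is not how any real tool works internally, and the order API in `SPEC` is invented; real generators do far more. But it shows why endpoint names cannot drift away from the specification: they are read from it.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}

# Hypothetical specification fragment, reduced to what the toy generator needs.
SPEC = {
    "openapi": "3.1.0",
    "info": {"title": "Order API (hypothetical)", "version": "1.0.0"},
    "paths": {
        "/orders": {
            "get": {"operationId": "listOrders", "responses": {"200": {"description": "OK"}}},
            "post": {"operationId": "createOrder", "responses": {"201": {"description": "Created"}}},
        },
        "/orders/{orderId}": {
            "get": {"operationId": "getOrder", "responses": {"200": {"description": "OK"}}},
        },
    },
}


def list_operations(spec: dict) -> list:
    """Collect (METHOD, path, operationId) for every operation, sorted by operationId."""
    operations = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method in HTTP_METHODS:
                operations.append((method.upper(), path, operation["operationId"]))
    return sorted(operations, key=lambda op: op[2])


def generate_stubs(spec: dict) -> str:
    """Emit Python source with one unimplemented function per operation."""
    lines = []
    for method, path, operation_id in list_operations(spec):
        lines.append(f"def {operation_id}(*args, **kwargs):")
        lines.append(f'    """{method} {path}"""')
        lines.append("    raise NotImplementedError")
        lines.append("")
    return "\n".join(lines)


stub_source = generate_stubs(SPEC)
```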

Of course, the developer still has to implement the server or client side. If he is clever about it, this implementation can even be secure against future changes. An update of the specification can be directly incorporated into the source code and only requires minor adjustments.
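One possible way to be "clever about it" is to keep the generated interface and the hand-written logic in separate classes. The following sketch assumes a generator that emits abstract base classes; all class and method names are hypothetical.

```python
from abc import ABC, abstractmethod


class OrderApi(ABC):
    """Stands in for code regenerated from the specification (hypothetical)."""

    @abstractmethod
    def get_order(self, order_id: str) -> dict: ...


class OrderApiV2(OrderApi):
    """The same interface after a specification update added one operation."""

    @abstractmethod
    def cancel_order(self, order_id: str) -> bool: ...


class OrderService(OrderApi):
    """Hand-written business logic; a regeneration never overwrites this class."""

    def __init__(self) -> None:
        self._orders = {"4711": {"order_id": "4711", "status": "open"}}

    def get_order(self, order_id: str) -> dict:
        return self._orders[order_id]


class OutdatedService(OrderApiV2):
    """Implementation that has not yet caught up with the updated interface."""

    def get_order(self, order_id: str) -> dict:
        return {"order_id": order_id}


def missing_operations(cls: type) -> set:
    """Names of the operations a class still has to implement."""
    return set(getattr(cls, "__abstractmethods__", set()))
```

After an update, Python refuses to instantiate `OutdatedService`, and `missing_operations` names the one method that still needs to be written. The adjustment stays small and easy to locate.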

Who defines OpenAPI?

OpenAPI has its origins in Swagger, whose development began around 2010. SmartBear, the company behind Swagger, recognised that an API specification can only be successful if it is developed openly and collaboratively (community-driven). That is why, in 2015, they transferred the rights to the OpenAPI Initiative. Big names such as Google, Microsoft, SAP, Bloomberg and IBM belong to this community. This community is very active and constantly developing the specification. The most recent release at the time of writing is OpenAPI 3.1.

So since 2016 at the latest, Swagger has only stood for the tools created by the company SmartBear. The specifications created with these tools are usually OpenAPI specifications. However, these tools also still support the old predecessor formats, especially the Swagger 2.0 format.
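The two formats are easy to tell apart: a Swagger 2.0 document carries a top-level `swagger` field, while an OpenAPI 3.x document carries an `openapi` field. A minimal sketch, with the function name being my own choice:

```python
def specification_format(document: dict) -> str:
    """Tell a Swagger 2.0 document from an OpenAPI 3.x document by its version field."""
    if "openapi" in document:
        return f"OpenAPI {document['openapi']}"
    if "swagger" in document:
        return f"Swagger {document['swagger']}"
    return "unknown"


old_style = {"swagger": "2.0", "info": {"title": "Legacy API", "version": "1.0"}, "paths": {}}
new_style = {"openapi": "3.1.0", "info": {"title": "Current API", "version": "1.0"}, "paths": {}}
```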

Use and spread of OpenAPI

TMForum regularly conducts studies on the spread of OpenAPI. The latest study shows a significant increase in adoption. Increasingly, companies are describing their existing APIs in OpenAPI specifications as soon as these are used for cross-company data exchange. According to the study, the market is divided into two camps in particular: clear advocates of OpenAPI and those organisations that intend to engage more with OpenAPI in the future.

In the presentation of OpenAPI 3.1, Darrel Miller from Microsoft explained that there are still many implementations with RAML. However, the trend shows that RAML is found more in in-house solutions. OpenAPI increasingly forms the basis for cross-company scenarios.

Code-First (Swagger) or Model-First (GEFEG.FX) to OpenAPI?

A major difference in the tools currently available on the market is whether the focus is on source code development or on model-based development. The Swagger editor is a typical example of a code-first application. In an editor, the API developer can directly capture and document his API specification. This includes both the service structures and the data structures. In addition to this machine-readable format, he also immediately sees the prepared documentation for another developer. The documentation is then available in a developer portal, for example.

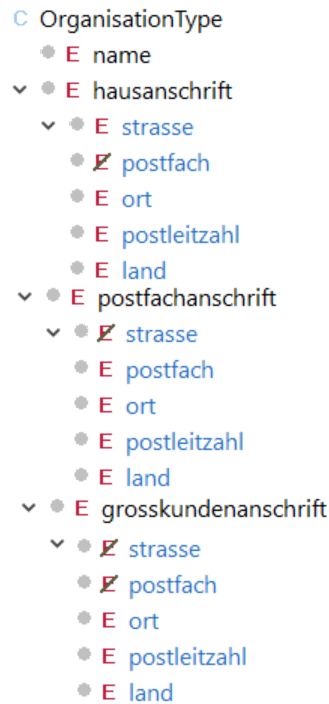

In contrast, the GEFEG.FX solution follows a model-driven approach. The focus here is not on the technical developer, but on the business user. He is responsible for the business view and the processes in the organisation or among organisations. He is often familiar with the existing EDI implementations, or at least knows the (economic and legal) requirements for the processes to be implemented. With this knowledge, he is able to use the existing semantic reference models and standards in the API specification. The wheel is not reinvented every time. If such a standard changes, GEFEG.FX simply incorporates the change into the API specification. At the same time, governance requirements are implemented smartly without restricting the individual departments too much. For this, it does not matter whether it is the implementation of the electronic invoice, the electronic delivery note, EDI, the consumer goods industry, the automotive industry, the utilities sector, UN/CEFACT, or others.

My recommendation

The code-first approach is perfect for web developers. Business users, however, are overwhelmed by it. Therefore, I give a clear recommendation to all those with a focus on EDI or the exchange of electronic business messages: Plan OpenAPI as a model-first approach. This is future-proof, extensible and customisable.